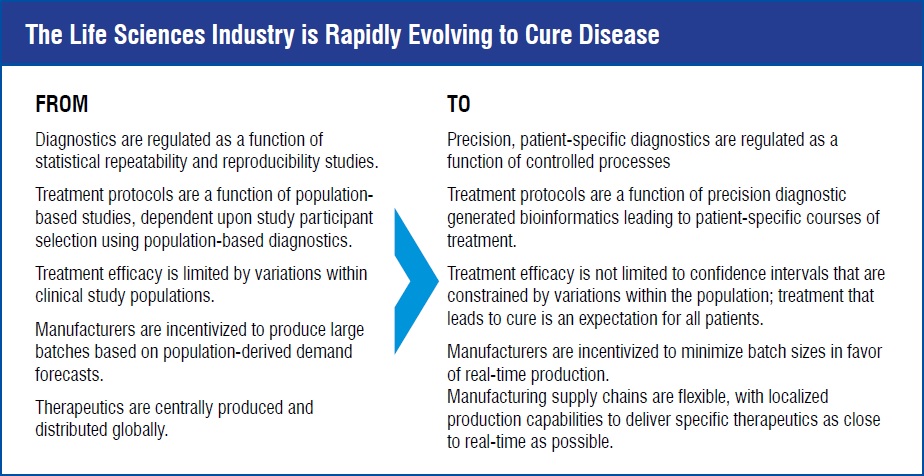

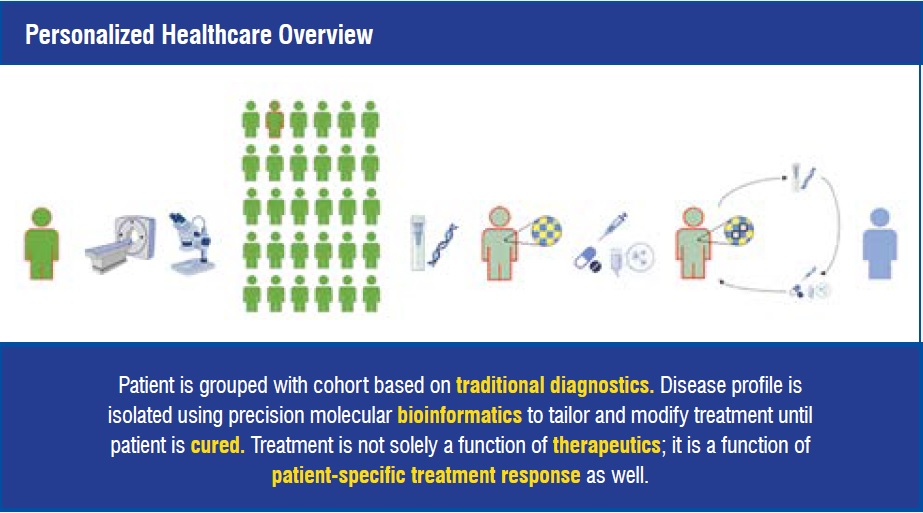

In 1998, Genentech received regulatory approval for Herceptin, a monoclonal antibody targeting HER2-positive breast cancer, marking the beginning of the modern personalised healthcare revolution. What made Herceptin different? It was the first therapy targeted at a subset of patients identified using a molecular diagnostic, ensuring that only patients who could benefit from the treatment would receive it. Today, the use of companion diagnostics and targeted therapies is widespread, and healthcare providers are beginning to leverage molecular residual disease (MRD) diagnostics to adapt and tailor each patient’s treatment in pursuit of curing disease. The personalised healthcare revolution has arrived, and the life sciences industry needs to adapt by accelerating new product introduction and technology transfer for manufacturing facilities, enabling contextualised real-time data capture, and developing integration and operations analysis tools that enable real-time release for GMP products.

Personalised healthcare is characterised by providing a unique, empirically informed treatment regimen for each patient, based on each individual patient’s expressed disease characteristics and how the patient’s disease responds to treatment.

The focus of Emerson Life Sciences is to address digital technology challenges in order to enable the personalised healthcare revolution. These challenges include:

1. Accelerating new product introduction and technology transfer for manufacturing facilities.

2. Driving real-time, end-to-end data capture with query-able context.

3. Enabling real-time release for GMP products.

For the life sciences industry, New Product Introduction (NPI) and Tech Transfer (TT) have been characterised by detailed project management involving collection of process definition information, production line configuration, deployment of process definition information into process controls, and validating that the facility functions as intended with respect to the product being introduced. This leads to resource-intensive and lengthy initiatives that inhibit delivery of novel, high-quality, life-saving treatments at reasonable cost. NPI and TT have both logical and physical requirements that need to be addressed to accelerate the personalised healthcare revolution.

Key to accelerating NPI and TT is having product and process definition information accessible from a single, shared source that spans the product lifecycle from product development to pilot-scale production to commercial production. Once product and process information has been consolidated into a shared platform, that information can be leveraged to determine facility fit and rapidly cascade the relevant process definition data to a receiving plant. Integrating and accelerating logical changeover is dependent upon receiving facilities having implemented local, parameterised control system configurations that align with equipment models shared between the enterprise process definition system and the plant process control system.
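
To make the facility-fit idea concrete, the following is a minimal Python sketch, assuming a shared process definition expressed as steps that each require an equipment class and a working volume, and a receiving plant described by an equipment model that uses the same class names. All class, field, and value names here (`ProcessStep`, `PlantUnit`, `facility_fit`, "bioreactor", "BR-101") are hypothetical illustrations, not part of any Emerson product; a real implementation would more likely follow an ISA-88-style equipment hierarchy.

```python
from dataclasses import dataclass, field


@dataclass
class ProcessStep:
    """One step of a shared (enterprise) process definition. Hypothetical model."""
    name: str
    equipment_class: str          # class name shared with the plant equipment model
    min_volume_l: float           # working volume the step requires, in litres
    parameters: dict = field(default_factory=dict)  # set-points to cascade to the plant


@dataclass
class PlantUnit:
    """One unit in the receiving plant's equipment model. Hypothetical model."""
    unit_id: str
    equipment_class: str
    max_volume_l: float


def facility_fit(steps, units):
    """Greedy first-fit: assign each step to the first unit of the same
    equipment class with enough capacity. Returns (assignments, gaps), where
    assignments maps step name -> (unit_id, parameters to cascade) and gaps
    lists the steps the plant cannot currently run."""
    assignments, gaps = {}, []
    for step in steps:
        match = next(
            (u for u in units
             if u.equipment_class == step.equipment_class
             and u.max_volume_l >= step.min_volume_l),
            None,
        )
        if match is None:
            gaps.append(step.name)
        else:
            assignments[step.name] = (match.unit_id, step.parameters)
    return assignments, gaps


steps = [
    ProcessStep("cell_culture", "bioreactor", 200.0, {"temp_c": 37.0, "ph": 7.0}),
    ProcessStep("harvest", "centrifuge", 200.0, {"rpm": 4000}),
]
units = [PlantUnit("BR-101", "bioreactor", 500.0)]
assignments, gaps = facility_fit(steps, units)
print(assignments)  # {'cell_culture': ('BR-101', {'temp_c': 37.0, 'ph': 7.0})}
print(gaps)         # ['harvest']
```

The greedy first-fit match is the simplest possible choice; it does not stop two steps from claiming the same unit and ignores scheduling, both of which a real facility-fit analysis would need to handle.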

With product and process definitions consolidated, and equipment models aligned, one final NPI and TT challenge to overcome is rapid changeover and implementation of new equipment. We typically refer to this concept as ‘plug-n-play.’ Pragmatically, this requires standardisation of control system hardware components, interchangeable controller configuration packets, and electronic marshalling. In response to these challenges, equipment manufacturers have begun partnering with control system suppliers, like Emerson, in order to make ‘plug-n-play’ a reality for life sciences manufacturing.

As detailed product and process definition data is consolidated into single sources of truth, and physical manufacturing equipment standardises on ‘plug-n-play’ capable controllers, the time and effort needed to complete New Product Introduction and Tech Transfer will decrease, supporting the needs of personalised healthcare manufacturing.

Electronic batch records (eBR) are widely adopted by the life sciences industry to minimise data entry errors, streamline compilation of production records, and enable review by exception to reduce the time and effort required for product release. The Manufacturing Execution System (MES) is a core enabling technology for eBR; it works in concert with Enterprise Resource Planning (ERP) and Process Control Systems (PCS) to orchestrate production activities, enforce controls, and capture relevant data. These systems have significantly improved operations management activities related to production records. However, implementing eBR typically depends on complex integration of application suites across the digital landscape, which leads to data replication, application-specific interfaces, and limited reporting and analysis capabilities.

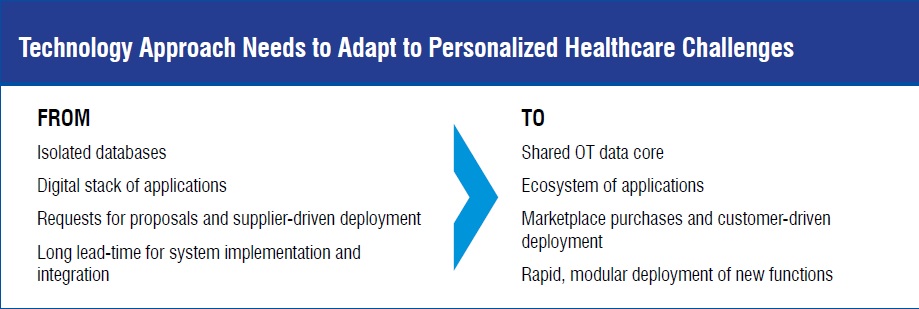

Smaller batch sizes, more frequent product changeovers, increased traceability requirements, and shorter product lead times are exposing limitations of the traditional MES-based eBR approach. Implementing an Operations Technology (OT) Data Core as the single source of truth for execution data provides a new information management foundation upon which to build the next generation of process control and production records. The OT Data Core must not only meet regulatory data integrity requirements but also facilitate platform-agnostic data sharing with query-able context.

The Data Core needs to support many data types and data sources while providing context-driven storage and accessibility. This technology differs from traditional data historians, which typically depend on time, sequence (or series), and source identity (or instrument tag) for organisation and retrieval. OT information needs to be readily accessible by many systems and users, with simple yet specific data identification to facilitate retrieval and usage.
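
The difference between tag-and-time retrieval and context-driven retrieval can be sketched in a few lines of Python. This is a hypothetical, in-memory illustration only, assuming each value is published with arbitrary key/value context (batch, step, parameter, source); the names `ContextStore`, `publish`, and `query` and the example tags are invented for illustration, and the sketch ignores persistence, audit trails, and the data integrity controls a GMP Data Core would require.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Record:
    """A single published value plus the context that gives it meaning."""
    value: object
    timestamp: datetime
    context: dict = field(default_factory=dict)


class ContextStore:
    """Hypothetical in-memory store queried by context rather than by tag/time."""

    def __init__(self):
        self._records = []

    def publish(self, value, **context):
        # Every value carries its context (batch, step, parameter, source, ...).
        record = Record(value, datetime.now(timezone.utc), dict(context))
        self._records.append(record)
        return record

    def query(self, **criteria):
        # Return every record whose context matches all supplied key/value pairs.
        return [
            r for r in self._records
            if all(r.context.get(k) == v for k, v in criteria.items())
        ]


store = ContextStore()
store.publish(36.9, batch="B-0042", step="cell_culture", parameter="temperature_c", source="TT-101")
store.publish(7.05, batch="B-0042", step="cell_culture", parameter="ph", source="AT-102")
store.publish(37.1, batch="B-0043", step="cell_culture", parameter="temperature_c", source="TT-101")
print([r.value for r in store.query(batch="B-0042", parameter="temperature_c")])  # [36.9]
```

A query such as `store.query(batch="B-0042", parameter="temperature_c")` retrieves values by what they mean rather than by which instrument tag produced them. The linear scan keeps the sketch short; a real store would index its context keys.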

To make the most of a Data Core, operations management activities need to be orchestrated by functionally specific applications that conform to Data Core context standards, so that data can be published and shared across many users and use cases. A key consideration when developing and documenting manufacturing processes is to understand which data inputs and outputs are shared across many platforms, use cases, or users. This data set defines the initial scope of the Data Core contents and informs user requirements for the Data Core. The OT Data Core facilitates pursuit of a ‘single source of truth’ for execution data. Additionally, aligning functional applications to the Data Core enables flexibility and rapid scalability for the manufacturing digital ecosystem.

Overcoming the final challenge to agile, patient-focused manufacturing supply chains requires tackling a primary cause of long product lead times: product release. GMP product release involves thorough evaluation of production records that include, among other elements, evidence of compliance with approved process standards, quality test results, and deviation investigations and responses. Many of these elements are addressed by eBR systems that include Review by Exception (RbE) functionality, but some components exist outside traditional eBR implementations and still contribute to lead time and effort.

One contributor to extended lead times is the resolution of process deviations. Real-time identification, investigation, and resolution of process exceptions can readily be implemented for existing eBR systems with RbE by adopting exception-handling functionality that triggers an immediate response from operations managers and quality assurance when exceptions occur. Addressing deviations in real time requires a technological component that is connected directly to the manufacturing process to identify the need for quality action and to record the associated investigation and remediation activities. It also requires a behavioural norm within the manufacturing organisation to respond immediately, investigate, and resolve deviations. Embedding this real-time deviation response functionality in the eBR system can help drive the desired organisational behaviour.
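
Review by exception combined with an immediate exception trigger can be sketched as follows. This is a minimal Python illustration under stated assumptions: approved limits are simple low/high ranges per parameter, the `notify` callback stands in for whatever alerting path reaches operations managers and quality assurance, and a batch counts as having its exceptions resolved only when it has no open deviations. The class and method names (`ExceptionMonitor`, `record`, `close`, `exceptions_resolved`) are hypothetical, not an eBR or MES API.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Limit:
    low: float
    high: float


@dataclass
class Deviation:
    batch: str
    parameter: str
    value: float
    limit: Limit
    status: str = "open"
    resolution: str = ""


class ExceptionMonitor:
    """Hypothetical review-by-exception monitor with an immediate trigger."""

    def __init__(self, limits: dict[str, Limit], notify: Callable[[Deviation], None]):
        self.limits = limits
        self.notify = notify
        self.deviations: list[Deviation] = []

    def record(self, batch: str, parameter: str, value: float) -> Optional[Deviation]:
        limit = self.limits.get(parameter)
        # Values within approved limits (and, in this sketch, parameters with
        # no approved limits) need no review and raise nothing.
        if limit is None or limit.low <= value <= limit.high:
            return None
        deviation = Deviation(batch, parameter, value, limit)
        self.deviations.append(deviation)
        self.notify(deviation)  # trigger the operations/QA response immediately
        return deviation

    def close(self, deviation: Deviation, resolution: str) -> None:
        # Record the investigation outcome alongside the deviation.
        deviation.status = "closed"
        deviation.resolution = resolution

    def open_deviations(self, batch: str) -> list[Deviation]:
        return [d for d in self.deviations if d.batch == batch and d.status == "open"]

    def exceptions_resolved(self, batch: str) -> bool:
        # Only exceptions need review; none open means nothing blocks review.
        return not self.open_deviations(batch)


alerts = []
monitor = ExceptionMonitor({"ph": Limit(6.8, 7.2)}, notify=alerts.append)
monitor.record("B-0042", "ph", 7.0)         # in range: nothing happens
dev = monitor.record("B-0042", "ph", 7.4)   # out of range: alert raised at once
print(len(alerts), monitor.exceptions_resolved("B-0042"))  # 1 False
monitor.close(dev, "pH probe recalibrated; product impact assessed")
print(monitor.exceptions_resolved("B-0042"))  # True
```

The point of the design is that the alert fires when the value is recorded, not when the batch record is compiled, so the investigation can start while the batch is still running.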

Another contributor to extended lead times is off-line product quality testing. Spectral Process Analytical Technology (PAT) is a rapidly evolving approach to evaluating product quality through physical properties that can be measured with in-line instrumentation. When integrated with a control loop, PAT can be used to optimise production performance, enforce product quality, and predict deviation conditions before they occur. Process development teams are actively pursuing ways to measure and record product quality attributes in-line, and PAT is a promising option for real-time quality control.
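
One simple way to predict deviation conditions before they occur is to extrapolate the recent trend of an in-line quality attribute and check whether it will leave its acceptance range within a chosen horizon. The Python sketch below assumes the PAT system already converts spectra into a numeric attribute value (the spectral or chemometric model itself is out of scope) and uses an ordinary least-squares straight line as a deliberately simple stand-in for a real predictive model; the function name `predict_excursion` and its parameters are hypothetical.

```python
def predict_excursion(times, values, low, high, horizon):
    """Fit value = intercept + slope * t by ordinary least squares, extrapolate
    `horizon` time units past the last reading, and flag an excursion if the
    predicted value falls outside the [low, high] acceptance range."""
    n = len(times)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired (time, value) readings")
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("readings must span more than one time point")
    sxy = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    slope = sxy / sxx
    intercept = v_mean - slope * t_mean
    predicted = intercept + slope * (times[-1] + horizon)
    return predicted, not (low <= predicted <= high)


# Hypothetical in-line readings of a quality attribute drifting upward.
predicted, excursion = predict_excursion(
    [0, 1, 2, 3], [1.0, 2.0, 3.0, 4.0], low=0.0, high=5.5, horizon=2
)
print(predicted, excursion)  # 6.0 True
```

In a closed loop, a flagged excursion could prompt a corrective adjustment or an early quality action while the attribute is still in range; how far ahead to look, and which model to trust, are process-specific decisions.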

With process data being digitally captured, contextualised, and evaluated in real time, a holistic, real-time product release platform is becoming achievable for more life sciences manufacturing organisations.

The personalised healthcare revolution gives us the tools necessary to end deaths from treatable disease in our lifetime. To enable this exciting new approach, our technological focus needs to shift from viewing specific medical devices or therapeutics as the value object of our processes to viewing each patient’s healing as the ultimate value proposition of an integrated process. To do this, the industry needs a more sophisticated digital toolset that addresses real-time contextualised data capture, rapid product and process introduction for manufacturing, and real-time release of GMP products.