Artificial Strategy

How to think strategically about AI

Brian D Smith, Bocconi University, University of Hertfordshire and University College London

Only two things are certain about AI. First, it will be possible to invest unlimited resources in it. Second, success will depend on investing wisely rather than foolishly. Consequently, your challenge is to think strategically, and not merely tactically, about AI investment. This article shows you how to do that.

As our industry stands on the verge of a revolution enabled by artificial intelligence (AI), the future belongs to those who can see a world that doesn’t yet exist. But, as Niels Bohr said, it’s dangerous to make predictions, especially about the future. We can’t know, with any precision or in any detail, how AI will play out in the life sciences industry. But nor can we afford the luxury of ignoring AI or taking a “wait and see” approach. Today, every industry executive is asking what AI will mean for our sector, how to use it to gain strategic advantage and, equally, how to avoid the strategic disadvantage of being left behind or placing the wrong bets.

Faced with this challenge, it is easy to become lost in the hype blizzard. This is true for any business but especially so for pharma, medtech and other life sciences sectors. In our industry, AI is only one of many changes in the strategic environment, from advanced therapies to the quest for health economic value. In this maelstrom of change, it is tempting to follow fashion but better to think independently. This article aims to help executives in our industry rise above the short-term noise and think strategically about how they can use AI to achieve their ultimate goals. It begins by setting out three fundamental premises and then poses four questions that life science leaders can use to guide their firms into the future.

Premise 1: AI is an Evolving, Heterogeneous Technology

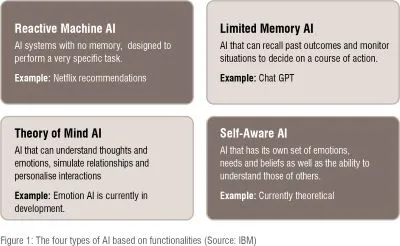

When we think about AI, we must begin by recognising that it is neither a homogeneous nor a static concept. AI is evolving rapidly and, like all new technologies, its youth is characterised by heterogeneity. At present, we can’t even agree on how to categorise different kinds of AI. At the very simplest, we talk of “weak” AI, such as Siri; “strong” AI, which might in future approximate the cognitive abilities of the human brain; and “super” AI, which, if we ever achieve it, would surpass human capabilities. AI specialists bristle at this way of categorising by capability. IBM, for example, prefers to think of four different kinds of AI functionality, as shown in Figure 1. This uncertainty and rapid evolution mean that, as we consider AI’s impact, we must be clear about which specific AI functionalities we are thinking about.

Premise 2: AI is a General Purpose Technology

Although our industry is built on a continuous stream of new technological developments, most of them are narrow, specific technologies such as CRISPR-Cas9 or, earlier, PCR. As impressive and important as these innovations are, their applications are mostly limited to biology and medicine. By contrast, AI falls into a different category, known as General Purpose Technology (GPT). GPTs are a relatively select group of inventions that have the power to influence the whole economy, not just part of it. Historical examples of general purpose technologies include the steam engine, electricity and the internet.

Understanding that AI is a general purpose technology is useful because it allows us to draw two important parallels about how it will develop. First, like those earlier innovations, AI is likely to become pervasive; much like electricity, it will not be restricted to industry niches. Second, it is not so much the core technology itself that will be important as its specialised applications, such as electric motors, steam trains and the world wide web.

Because AI is a general purpose technology, we can expect it to become ubiquitous and, consequently, strategic advantage will not arise simply from using AI. Instead, as with earlier GPTs, it is AI’s myriad specific applications that will matter.

Premise 3: AI Elevates Strategic Choices

As AI evolves into its many applications, it is reasonable to assume two things. First, there will be many more opportunities to spend money on AI applications than any company can afford; no company will be able to invest fully in every possible application of AI. Second, these applications will vary not only in what they do but also in their relative costs, potential benefits and the probability of realising those benefits. So all companies, whether their AI investment resources are huge or tiny, will find it appropriate to invest heavily in some applications, less in others and perhaps not at all in some. In other words, every company will have to choose how much to invest in AI and where. Moreover, that investment calculus will vary from company to company, so the right pattern of choices will be different for each. Consequently, in a world where AI is everywhere, strategic advantage will lie with those companies whose set of AI resource allocation decisions best fits their individual context. Conversely, strategic disadvantage will await those who make choices that may be right for others but don’t fit their own context. To put it another way, the source of competitive advantage will lie in a firm’s processes for strategic decision-making.

These three premises interweave to make the job of a life science leader both harder and more important. It is harder because it requires choices about something that is evolving and varied, and because leaders can’t simply copy the choices of other firms that don’t share exactly the same context. It is more important because, as AI becomes central to competitiveness, the gains from good strategic choices and the losses from poor ones become more salient. To rise to this hard and important challenge, our industry’s leaders might reflect on a sequence of four questions.

What value will AI create?

Although AI will change much, there is a strategic fundamental that it will not change. Strategic advantage will continue to flow from being significantly better than our competitors at creating value for the customer (in our industry’s case, some combination of payers, patients and healthcare professionals). Equally, there will remain only three ways of creating value: lower costs, better products or more closely tailored products and services. This will remain as true in the AI age as it was when Porter set out these three “generic strategies” in the 1980s.

Central to Porter’s idea was that being significantly better at anything demands expensive, limited resources. Consequently, few firms can be significantly better than their best rivals at all three things at once. Instead, firms succeed by choosing to be research-based innovators or low-cost generics or “customer intimates”. We see obvious examples of all three choices in the global life sciences industry. We also see the failure of firms that avoid that choice.

It follows that industry leaders must be disciplined about how they intend to create value, and their decisions about where to invest in AI must mirror that strategy. A research-led innovator must focus its AI investment on research and development. At the same time, it will have to limit its AI investment in manufacturing, supply chain and commercial functions to what is merely adequate and no more. Cost leaders and customer intimates will have to make different choices that align with their different strategies.

Such strategic discipline won’t be as easy as it sounds. Every department will demand AI investment, but effective industry leaders will resist the temptation to spread resources evenly across the entire value chain. They will decide what kind of superior value AI will help create (better products, lower costs or better tailoring) and where AI will only maintain competitive parity. That decision will form a necessary framework for the next set of choices.

What capabilities will AI enable?

Porter’s generic strategies provide a first guide to aligning AI investment with the rest of the firm’s strategy. But they are only a rough guide because, within each of the three, there are many subsets of strategy. Among innovators, for example, these subsets include traditional blockbuster approaches, rare disease companies and advanced therapies supported by companion diagnostics. In my own research, I have identified no fewer than 26 distinct business models in the life sciences industry.

This speciation of business models is important because, although they share some capabilities, each is characterised by its own distinctive capabilities. For example, a primary care blockbuster model needs value demonstration capabilities in a way that a rare disease model does not. Similarly, some advanced therapy models in oncology require bioinformatics capabilities that are much less important to a branded generics model, which might rely instead on extracting insight from purchasing behaviours. The business-model-specific capabilities are also affected by the firm’s other strategic choices. For example, innovative firms differ in which research and development activities they keep in-house and which they outsource, which technologies they pursue and which therapy areas they address. All of these choices influence which capabilities are most important to the firm’s competitiveness.

It follows that strategic investment in AI will have the most impact where it enhances those capabilities that are critical to, and characteristic of, the firm’s business model. Investing in outsourced activities, or in capabilities that are not characteristic of the firm’s business model, would risk under-resourcing the capabilities that most strongly influence its competitiveness. In practice, any life science business model requires a wide range of capabilities but, although all have to be at least adequate, only a few need to be distinctively superior (relative to competitors) to ensure the success of the business model. Identifying these few would guide senior leaders to a more precise choice of AI investments. For instance, different innovator firms might allocate resources differently between AI for protein folding, for clinical trials data or for real-world value analysis, depending on exactly which capabilities were critical to their business model’s effectiveness.

In essence, then, appropriate decisions about AI depend on deciding which capabilities are most important to the firm’s model. Again, this is difficult when no capability is unnecessary and every individual or department regards the capabilities it fosters as the most important. But effective leaders will be able to separate capabilities that are essential but not distinguishing from those that are both essential and distinguishing. Having used that categorisation to make specific AI investment decisions, they will then face the task of implementing them.

How will we shape the J curve?

To adapt a Churchillian quote, the choice of which AI to invest in is not the end, nor even the beginning of the end, but it is perhaps the end of the beginning. Industry executives must then make those decisions pay off. The difficulty of this is captured by what is known as the productivity paradox, observed when information technology was introduced into business in the 1980s. At that time, productivity growth appeared to stall even as firms computerised. As Nobel laureate Robert Solow observed in 1987: “You can see the computer age everywhere but in the productivity statistics”. As we now know, this phenomenon did not persist and, eventually, productivity rose rapidly. This pattern, in which investment is followed first by a period of depressed productivity and then by rapidly increasing productivity, was christened the productivity J-curve by Erik Brynjolfsson, now of Stanford’s Institute for Human-Centered Artificial Intelligence, and his colleagues.

The explanation of the J curve lies in the parallels with earlier GPTs. The impact of GPTs doesn’t follow a linear path because they often require other parts of the economy to adapt before they become fully effective, as we saw with the laying of railway lines, the installation of the electric grid and the development of fast internet connectivity. This leads to a short-term dip in economic productivity before the growth created by the innovation is felt. The length and depth of this dip depend on how well the economy or an organisation adapts around the GPT. If that adaptation involves simply replacing old methods with upgraded versions of the same methods and processes, productivity growth is smaller and more delayed than if newer, better methods and processes are adopted. For example, the full benefits of steam and electric power were achieved not by augmenting existing water mills and small carriages but by building large factories, big centralised power stations and long trains pulled by dedicated steam locomotives.

Applying the lessons of the J curve to the life sciences industry implies that its leaders should reflect carefully on how to adapt their organisations to realise the full benefits of AI. Minor adaptations of clinical trials, for example to improve patient selection and recruitment, may produce a longer, deeper productivity dip than transforming trials with so-called “digital twins”. In the same way, applying AI to analyse real-world cost and outcome data may produce a shorter, shallower J-shaped curve than using AI to improve existing health economic models based on limited trial data.

For life science leaders, the productivity paradox and the J-shaped curve mean that their strategic decisions cannot be limited to AI itself. They must also include the complementary capabilities, processes and activities that will enable a short, shallow J curve leading into a steep rise in productivity. These decisions will be hard to implement, especially in large companies with entrenched functional silos that regard information technology as a supporting, subordinate activity. But effective leaders will recognise that capabilities and processes are cross-functional. They will make investments and changes across functional boundaries to achieve the goals of the business rather than the objectives of departments. Having done so, they might reasonably expect to implement AI successfully. But they will still need to avoid the Turing Trap.

How will we avoid the Turing Trap?

Uniquely, the life sciences industry exists because of its implicit contract with society. Although rarely discussed, this arrangement has been in place for decades and has been enormously beneficial for both the industry and wider society. Under the unspoken terms of this social contract, society funds basic research and provides the highly educated personnel the industry requires. It also creates markets for the industry’s innovative but expensive products through public and subsidised private insurance systems. Importantly, it also grants temporary market exclusivity, through intellectual property rights and regulatory conditions, which enables the necessary return on investment. In return for these accommodations, the industry undertakes to provide, and mostly does provide, innovative products that extend, improve and save lives and that eventually commoditise into a stream of good and inexpensive generic products. This arrangement also has the valuable by-products of many well-paid jobs and a strong contribution to the overall economy.

This social contract exists only with society’s agreement. It is vulnerable to how the electorate perceives the industry and to how governments respond to those perceptions. If, as seems likely, AI has a large impact on the industry, we would be naïve not to expect unintended consequences that might threaten this essential but fragile arrangement.

The most obvious risk is the “Turing Trap”, another concept from Erik Brynjolfsson. Simplified and translated into the industry’s socially contracted context, the Turing Trap says that if AI is used simply to replace people, cut costs and improve profits, and these benefits are not shared equitably between the industry and society, then society may decide the contract is no longer a fair one. If, for example, AI makes new medicines and technologies better and cheaper to make but no less expensive to buy, society may begin to question the contract. And if AI reduces effective competition, allows exclusivity to be extended indefinitely and destroys skilled jobs, then society, through policy and legislation, may decide not to fulfil its part of the social contract. That would be an unintended consequence of huge negative significance for both the industry and society.

Given the importance of the social contract to the industry, the Turing Trap is an existential threat not only to individual companies but to the industry as a whole. It will not be easy for profit-driven, shareholder-owned companies to balance the distribution of AI’s benefits. But effective industry leaders will recognise that the long-term survival of the industry requires them to avoid the trap and not put the social contract at risk. They will avoid AI’s unintended consequences by sharing its benefits with society.

Simple, Clear, Wrong

Our industry is living in interesting times. As if the dramatic changes in its scientific and sociological environments were not challenging enough, its leaders and senior executives must anticipate and respond to the impact of AI. The industry’s media and consultants are keen to offer clear, simple answers. But as H. L. Mencken famously said, for every complex problem there is an answer that is clear, simple and wrong. The challenge for our industry is to avoid simplistic thinking and to think strategically about AI. To do this, it helps to begin with the three premises set out in this article: AI is evolving and heterogeneous, it will be pervasive in its applications, and it will demand strategic choices. Those responsible for making strategic choices about AI will make better decisions if they reflect on the four questions posed in this article. Good choices will align AI investment with core strategy. They will enable the capabilities that most distinguish the firm’s business model. They will shape the J curve and limit the productivity paradox. They will avoid the Turing Trap. If leaders reflect in this way, they will do what they must do regarding AI: they will think, and then behave, strategically.