LEARN FROM YOUR METRICS

Using Operational Data to Improve Operations in Pharmaceutical Manufacturing and QC Labs

Matteo Bernasconi, Research Associate, University of St.Gallen

Gian-Andri Steiger, Research Associate, University of St.Gallen

Marten Ritz, Post-doctoral Researcher, University of St.Gallen

Thomas Friedli, Director, Institute of Technology Management

Operational excellence (OPEX) is the philosophy summarising numerous technical and social practices that aim at improving operations. However, the complexity of the practices and the multiplicity of initiatives make it difficult to consistently identify and set the most promising priorities. Being able to take decisions based on data and measured situations can improve the efficacy of strategic initiatives. The St. Gallen manufacturing and quality control (QC) benchmarking programmes provide pharmaceutical companies with a rich base of information to inform their decision-making processes.

The importance of jointly analysing a manufacturing facility’s maturity and performance was outlined in the article The Link between Plant Performance & Maturity – Seeing the whole picture. The St. Gallen Model for OPEX depicts the synergies between technical & social sub-systems (Friedli & Bellm, 2013) and serves as the backbone of the OPEX benchmarking, which has been supporting pharmaceutical companies in identifying potentials for improvement for 15 years. As discussed in the article St. Gallen OPEX Benchmarking for Pharmaceutical Manufacturing Sites – Measure Yourself Against the Best but Do It Right, the benchmarking helps to support continuous improvement in manufacturing. However, to advance in the lean journey, an end-to-end consideration of the value chain is required to systematically identify bottlenecks (Ritz, 2022). In the pharmaceutical industry, QC can strongly affect operations (Barbarite & Maslaton, 2008; Friedli et al., 2018), and QC labs have been moved into the spotlight by the FDA in recent years (FDA, 2016, 2022; Friedli et al., 2019). The QC lab benchmarking, which was presented in the article Benchmarking Pharmaceutical Quality Control Labs – Holistically assess Operational Excellence in Pharmaceutical Companies, supports firms in improving lab processes to systematically progress in operations along the entire value chain.

This article discusses two practical examples of how data-driven improvements can be initiated and derived from a benchmarking. Firstly, an example from the manufacturing and OPEX benchmarking is presented. Secondly, a project that emerged from QC lab benchmarking results is illustrated and discussed. Finally, the benefits of data-driven decisions are highlighted.

Data-based identification of improvement potentials: An example in the area of pharmaceutical manufacturing

The possibility of identifying the right levers to improve, based on a holistic comparison against peers, is a key element in defining and starting strategic improvement initiatives. As a balanced approach to performance and maturity calculation, the OPEX benchmarking report provides the basis for making a meaningful comparison and defining the next steps. In the general procedure, a benchmarking can be further enriched by a structured result workshop, in which the operations team receives further insights from the results and guidance on how to act on them. When multiple facilities participate in the assessment, the sites are compared, and the network-internal learnings are highlighted and discussed. This facilitates the recognition of site-specific capabilities and knowledge sharing within the manufacturing network.

The result workshop provides the possibility to better understand the link between plant maturity and performance, by deep diving into the individual maturity items and key performance indicators. For example, during a benchmarking we observed a situation of high Total Productive Maintenance (TPM) maturity but low TPM outcome performance across all participating facilities of the production network. The low TPM performance was mainly driven by a low level of Overall Equipment Effectiveness (OEE).

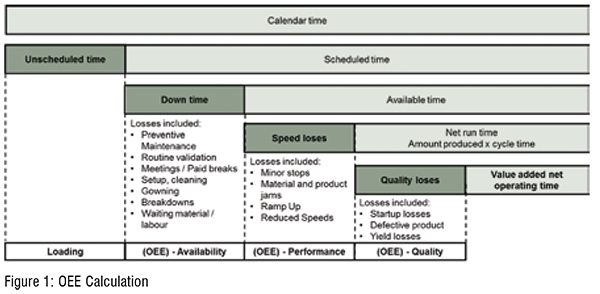

The link between good maintenance practices and good quality is well established in the manufacturing literature (Cua et al., 2001; McKone et al., 2001). Nevertheless, some very quality-oriented industries, such as pharma, still have considerable potential for improving OEE (Friedli et al., 2013). Setting the focus on TPM maturity and performance was very relevant in this case for two reasons. On the one hand, TPM is the first pillar on which companies should concentrate to progress towards OPEX. TPM practices ensure stable equipment, which is fundamental for stable and reliable processes before inventories are finally reduced to increase efficiency. On the other hand, all participating sites from this company were suffering from the same conditions irrespective of the types of products manufactured. The systematic OEE performance problem across the manufacturing network provides the ideal setting for a corporate initiative that targets the issue not only in one plant but across the sites, and therefore contributes to the stability of the equipment in the whole organisation (Figure 1).

The OEE is calculated as the product of three elements: OEE availability, OEE performance and OEE quality (see Figure 1). The OEE availability represents the percentage of the scheduled time during which the equipment has operated. The OEE performance denotes the amount produced multiplied by the ideal cycle time, divided by the time in which the equipment was actually producing. The OEE quality shows the outputs without defects as a percentage of the overall inputs.
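As a minimal sketch of this calculation, the following Python snippet computes the three factors and combines them, assuming the common convention in which OEE is the product of availability, performance and quality. The function name, the parameter names and all figures in the usage example are illustrative assumptions and are not taken from the benchmarking data.

```python
def oee(scheduled_time, operating_time, ideal_cycle_time, total_units, good_units):
    """Return (availability, performance, quality, oee) as fractions.

    Illustrative assumptions: times are in minutes, ideal_cycle_time is in
    minutes per unit, total_units counts all units processed.
    """
    if scheduled_time <= 0 or operating_time <= 0 or total_units <= 0:
        raise ValueError("times and unit counts must be positive")
    # Share of the scheduled time in which the equipment actually ran.
    availability = operating_time / scheduled_time
    # Time the output should have taken at ideal speed vs. time it took.
    performance = (ideal_cycle_time * total_units) / operating_time
    # Share of units without defects.
    quality = good_units / total_units
    return availability, performance, quality, availability * performance * quality


# Hypothetical shift: 480 min scheduled, 360 min running,
# 0.5 min ideal cycle time, 600 units processed, 570 without defects.
a, p, q, total = oee(480, 360, 0.5, 600, 570)
print(f"availability={a:.2%} performance={p:.2%} quality={q:.2%} OEE={total:.2%}")
```

In this invented example, availability is the weakest of the three factors, which mirrors the kind of pattern discussed in the case.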

The St. Gallen team analysed the situation and identified that the low OEE was mainly driven by high downtimes. These can be divided into two categories: planned (setup & cleaning) and unplanned (breakdown, etc.). Thanks to the workshop, the team was able to deep dive into the maturity of the TPM practices and link them back to the low performance due to downtimes. Analyses revealed that unplanned downtimes were not the main driver of the low OEE; rather, setup & cleaning was the cause. TPM practices showed a high maturity in the category of housekeeping but a very low maturity in setup time reduction. Thus, the low OEE performance (especially regarding setup & cleaning) can be improved by focusing on the blank spots in maturity, better training and better scheduling of setup times. The workshop provided the unique opportunity to take a closer look at the results, investigate the cause-and-effect relationships behind the low performance findings, and discuss the most suitable initiatives to target the problem. One initiative, for example, focuses on improving overall planning and scheduling.
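The split into planned and unplanned downtime can be sketched as follows. This is only an illustration of the reasoning: the category names follow the text, while the downtime minutes, the helper function and its name are hypothetical.

```python
# Categories counted as planned downtime, following the text (setup & cleaning).
PLANNED = {"setup", "cleaning"}


def availability_loss(scheduled_minutes, downtime_by_category):
    """Split lost availability into planned and unplanned shares of scheduled time."""
    planned = sum(m for c, m in downtime_by_category.items() if c in PLANNED)
    unplanned = sum(m for c, m in downtime_by_category.items() if c not in PLANNED)
    return {
        "planned_share": planned / scheduled_minutes,
        "unplanned_share": unplanned / scheduled_minutes,
        "availability": 1 - (planned + unplanned) / scheduled_minutes,
    }


# Hypothetical downtime log for one line and one shift, in minutes.
log = {"setup": 90, "cleaning": 60, "breakdown": 30, "minor_stops": 20}
result = availability_loss(480, log)
main_driver = (
    "planned" if result["planned_share"] > result["unplanned_share"] else "unplanned"
)
print(result, main_driver)
```

With these invented numbers, planned downtime accounts for roughly three times as much lost time as unplanned downtime, so setup and cleaning would be the first place to look.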

Data to action: How benchmarking data allows the derivation of improvement actions in the field of quality control labs

Data-driven improvement actions are not solely a topic in the field of manufacturing. St. Gallen’s dedicated Quality Control (QC) Benchmarking is also used intensively for the systematic definition of improvement strategies. The following practical example shows how lab-specific and company-wide improvement actions may be derived.

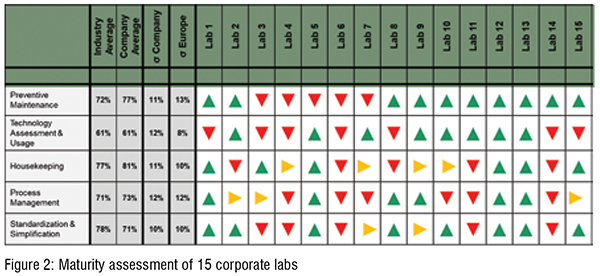

In the presented case, the QC lab benchmarking was conducted individually for 15 different sites of the company. The overall performance based on the sites’ KPIs showed significant differences among the various QC labs within the corporation. Besides the actual performance, the labs’ maturity levels were assessed using specific enabler questions. These questions aim at assessing how advanced the respective process structures and management approaches in the QC labs are. All enablers are scientifically derived and proven to have a direct impact on the labs’ overall outcome performance levels. The enabler questions are structured in three main categories, namely the Maintenance & Quality System, the Planning & Steering System and the Management System. These three categories are in turn divided into a total of 13 sub-categories. Due to their proven positive effect on performance, the enablers act as a starting point and toolbox for defining particular improvement initiatives. Further information about the QC benchmarking approach can be found in the article in issue 47 / 2022.

The maturity levels of the 15 sites reflected the variability in the respective performance levels (cf. Figure 2). In particular, the sub-categories Housekeeping, Process Management and Standardisation & Simplification showed a significant variability in maturity, while having an overall high degree of maturity on the corporate level. This indicated that certain successful practices already exist in those fields in some labs, while other QC labs have significant improvement potential in the same fields.
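One way to make this pattern visible in data is to look for sub-categories that combine a high average maturity with a large spread across sites. The sketch below is an assumption about how such a screen could be set up; the 1 to 5 scale, the thresholds, the scores and the third sub-category are invented for illustration and do not come from the benchmarking.

```python
from statistics import mean, pstdev

# Hypothetical maturity scores (assumed scale 1-5), one value per site.
scores = {
    "Housekeeping": [4.8, 4.6, 2.1, 4.9, 2.4, 4.7],
    "Process Management": [4.5, 1.9, 4.7, 4.6, 2.2, 4.8],
    "Hypothetical stable sub-category": [3.9, 4.0, 4.1, 3.8, 4.0, 4.2],
}


def transfer_candidates(scores, min_mean=3.5, min_spread=1.0):
    """Return sub-categories with a high network average but a large spread.

    Such sub-categories suggest that good practices exist in some labs
    and could be transferred to the others. Thresholds are assumptions.
    """
    return sorted(
        name
        for name, values in scores.items()
        if mean(values) >= min_mean and pstdev(values) >= min_spread
    )


candidates = transfer_candidates(scores)
print(candidates)
```

Sub-categories that pass both thresholds are natural starting points for knowledge transfer, because the network already contains labs that perform well in them.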

A subsequent project was initiated to systematically identify successful practices that already exist in the lab network and to facilitate the corresponding knowledge transfer among all labs. The aim is to make optimal use of existing improvement opportunities.

When analysing the benchmarking data, it became apparent that one of the major challenges for QC labs is the complexity they face. Large variations in the number of different tests to be performed and fluctuating test volumes inhibit the standardisation of lab layouts and the associated processes. Furthermore, the labs face the problem of limited available floor space. With this challenge in mind, five labs were selected for further analysis according to their maturity levels. Three labs with a comparably high maturity and two with a rather low level of maturity were selected for further on-site investigation and documentation of the status quo. The idea behind this selection was to enable a knowledge transfer from advanced to less advanced labs and thus make effective use of existing successful practices, which in turn should increase the overall maturity and performance on a corporate level.

Another field of action identified during the QC benchmarking was the sites’ handling of quality events. Major differences were observed, especially in the share of CAPAs overdue and in reoccurring deviations. Consequently, the event handling process was analysed on both the corporate and the site level. The overall event handling process was defined in detail in a global standard operating procedure (SOP). When examining the local translation and implementation of that global SOP, two distinct bottlenecks were identified: the initial impact assessment (IIA) and the root cause investigation (RCI).

For the initial impact assessment, for example, the global SOP defined a hard deadline of three days. This deadline did not account for local specifics of the different sites. Dedicated site interviews showed that, depending on the complexity of a site, three days are not sufficient for a profound IIA. In this respect, two phenomena were observed. Firstly, in some labs IIAs were performed on a rather superficial level. This regularly resulted in rather ineffective RCIs and CAPAs, considerably increasing the share of reoccurring deviations. Secondly, some other labs tended to perform a profound IIA, which often led to missed deadlines at complex sites. This in turn created a backlog and started a vicious cycle that leads to growing shares of CAPAs overdue.
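How strongly a fixed deadline affects individual sites can be checked with a simple per-site calculation. The following sketch assumes that each quality event is recorded with the date it was opened and the date the IIA was completed, and that the three days are counted as calendar days; the record layout, the site names and all dates are hypothetical.

```python
from datetime import date

IIA_DEADLINE_DAYS = 3  # hard deadline from the global SOP, assumed calendar days

# Hypothetical quality-event records: (site, event opened, IIA completed).
events = [
    ("Site A", date(2022, 3, 1), date(2022, 3, 3)),
    ("Site A", date(2022, 3, 7), date(2022, 3, 9)),
    ("Site B", date(2022, 3, 1), date(2022, 3, 8)),
    ("Site B", date(2022, 3, 4), date(2022, 3, 6)),
    ("Site B", date(2022, 3, 10), date(2022, 3, 15)),
]


def overdue_share_by_site(events, deadline_days=IIA_DEADLINE_DAYS):
    """Return {site: share of IIAs that took longer than the deadline}."""
    totals, overdue = {}, {}
    for site, opened, completed in events:
        totals[site] = totals.get(site, 0) + 1
        if (completed - opened).days > deadline_days:
            overdue[site] = overdue.get(site, 0) + 1
    return {site: overdue.get(site, 0) / n for site, n in totals.items()}


shares = overdue_share_by_site(events)
print(shares)
```

A site with a consistently high share of late IIAs may need a deadline that reflects its complexity, whereas a site with no late IIAs but many reoccurring deviations may be closing assessments too superficially.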

With these results in mind, a more flexible setup of local guidelines was advised, one that allows a site’s complexity to be considered when implementing global standards. The set IIA deadline should be feasible for all sites. Otherwise, there is an inherent risk of evasive operational behaviour with negative consequences such as the previously mentioned increase in reoccurring deviations and CAPAs overdue.

These examples show how patterns in benchmarking data can be analysed, how their root causes can be identified, and how this leads to effective and practical improvement actions.

Outlook

Data is the basis for all improvement initiatives. It provides a holistic picture of the status quo, allowing the identification of improvement potentials. The systematic identification, adaptation and implementation of successful practices contributes significantly to a company’s journey towards operational excellence. Continuous improvement requires that deficiencies are made visible and that the effectiveness of improvement actions is verifiable. This article showed two distinct examples of how improvement initiatives may be built on benchmarking data. St. Gallen’s holistic approach to measuring performance allows companies to define strategic improvement initiatives that lead to a stable and long-lasting enhancement of a company’s performance.

In the next journal issue, the St. Gallen team will conclude their article series with a summary of the operational excellence research field in the context of the pharmaceutical industry and provide an outlook on future trends and current research activities in that field.

Literature

Barbarite, J., & Maslaton, R. (2008). "Managing Efficiency in a Quality Organization". Pharmaceutical Processing.

Bajaj, V., & Reffell, B. (2008). "The Global State of Operational Excellence: Critical Challenges & Future Trends".

Cua, K. O., McKone, K. E., & Schroeder, R. G. (2001). "Relationship between Implementation of TQM, JIT, and TPM and Manufacturing Performance". Journal of Operations Management, 19, pp. 675-694.

FDA. (2015). "Submission of Quality Metrics Data – Guidance for Industry Draft".

FDA. (2022). "Food and Drug Administration Quality Metrics Reporting Program; Establishment of a Public Docket; Request for Comments".

Friedli, T., & Bellm, D. (2013). "OPEX: A definition". In: Friedli, T., Basu, P., Bellm, D., & Werani, J. Leading Pharmaceutical Operational Excellence: Outstanding Practices and Cases. Berlin: Springer; 2013. pp. 7-26.

Friedli, T., Köhler, S., Macuvele, J., Eich, S., Ritz, M., Basu, P., & Calnan, N. (2019). "FDA Quality Metrics Research: 3rd Year Report".

Friedli, T., Lembke, N., Schneider, U., & Gütter, S. (2013). "The Current State of Operational Excellence Implementation: 10 Years of Benchmarking". In: Friedli, T., Basu, P., Bellm, D., & Werani, J. Leading Pharmaceutical Operational Excellence: Outstanding Practices and Cases. Berlin: Springer; 2013. pp. 35-58.

McKone, K. E., Schroeder, R. G., & Cua, K. O. (2001). "The Impact of Total Productive Maintenance Practices on Manufacturing Performance". Journal of Operations Management, 19, pp. 39-58.

Ritz, M. (2022). "Operational Excellence across Manufacturing and Quality Control – A Guideline to Avoid Optimizing Silos in Pharmaceutical Production". Dissertation, University of St.Gallen.